Welcome to the CDS Handbook¶

This is the public knowledge base for CDS. Here you'll find information about how we work, the tools and technologies we use, and what life is like as a consultant at CDS.

Who is this for?¶

- Potential employees — get a transparent look at what working at CDS is really like, our values, and how we approach delivery.

- Clients and partners — understand our expertise, our ways of working, and the standards we hold ourselves to.

- CDS consultants — a shared reference for our practices, tools, and approaches.

Explore¶

About CDS¶

Learn about life at CDS and the values that guide us.

Life at CDS · Our Values · Our Behaviours

Ways of Working¶

Our delivery approach, agile practices, and how we run projects.

Agile Practices · Delivery Approach · Test Approach

Tools & Technologies¶

The tools and technologies we use and recommend as best practice.

Contributing¶

This handbook is maintained by CDS staff. If you work at CDS and want to contribute, see the Contributing Guide.

About CDS¶

CDS is a consultancy where people genuinely enjoy the work they do and the people they work with. This section covers who we are, what we believe in, and what it's like to be part of the team.

Life at CDS — What to expect as a CDS consultant, how we learn and grow, and how we work together.

Our Values — The principles that guide how we deliver, collaborate, and make decisions.

Our Behaviours — The behavioural expectations we have of ourselves and our people.

Life at CDS¶

What to expect¶

CDS is a consultancy where people genuinely enjoy the work they do and the people they work with. We believe in creating an environment where consultants can do their best work, grow their skills, and have a real impact on the projects they deliver.

Learning and development¶

We invest in our people. Whether it's conference attendance, certifications, internal knowledge sharing sessions, or time to experiment with new technologies, we create space for continuous learning.

How we work together¶

We're a collaborative team. We share knowledge openly, support each other on projects, and make time for the social side of work too. This handbook is a great example of that — built and maintained by the whole team.

Want to know more?

If you're considering joining CDS, we'd love to hear from you. Reach out to our recruitment team to find out more about current opportunities.

Our Values¶

CDS has five core values. They are not aspirational — they describe how we actually work and how we expect every person at CDS to show up, regardless of role or seniority.

Tenacity¶

We are determined and diligent. It's natural to face obstacles and difficulties every day. We never flinch from them, never look for the path of least resistance. We take responsibility for all our actions and take pride in all our achievements.

Togetherness¶

We're united in our common purpose. A group of talented, like-minded individuals working collectively to transform our clients' organisations for the better.

Integrity¶

We always do what we say we will. When we talk to each other or to our clients, we are honest, open and accurate. Without exception. No excuses.

Curiosity¶

We are never afraid to ask "what if?", "why?" or "how?". We are naturally and unashamedly inquisitive and intrigued to learn or discover a better way.

Challenging¶

We challenge the status quo. We positively and proactively challenge, looking for ways to improve, innovate and inspire.

All five values are expressed through specific behaviours at every level of the business. See Our Behaviours for the full framework.

Our Behaviours¶

At CDS, our values are brought to life through specific behaviours. This framework sets out what those behaviours look like at each seniority level — so that expectations are clear and career progression is transparent.

Seniority levels¶

| Level | Brief description | Scope |
|---|---|---|
| Senior Leadership | Accountability for organisation-wide strategy, outcomes, and direction | Organisation |
| Principal | Highly experienced, V-shaped professionals providing leadership and deep expertise, with significant organisational accountability | Organisation |
| Lead | Experienced practitioners who are adaptable and flexible, with roles which contribute beyond the day-to-day to growing CDS | Department |
| Mid | Broad level covering skilled practitioners across the majority of "doing" roles in the business | Team |
| Associate | Entry level roles across disciplines and departments | Self |

Tenacity¶

We are determined and diligent. We never look for the path of least resistance, and we take pride in all our achievements.

Practitioner Excellence

Demonstrates strong performance with core skill set and shows a desire to expand skills in other areas. Seeks opportunities to learn new techniques and approaches, building a solid foundation of capability.

Proactivity and Drive

Brings enthusiasm to their work and resilience when facing challenges, maintaining a constructive attitude that energises those around them. Approaches obstacles with determination and a problem-solving mindset.

Practitioner Excellence

Demonstrates strong performance with core skill set and actively looks to expand skills in other areas. Champions their core skill set within CDS, sharing expertise with colleagues and contributing to capability discussions.

Proactivity and Drive

Brings proactive energy to driving work forward, approaching obstacles as opportunities and maintaining team morale through difficult periods. Takes initiative to overcome challenges and inspires others through their determination.

Practitioner Excellence

Displays excellence with core skill set and is capable of taking on varied responsibilities. Champions their core skill set within CDS, serving as a go-to expert and mentor for others developing their capabilities.

Proactivity and Drive

Brings infectious optimism combined with determined execution, inspiring teams to push boundaries whilst keeping spirits high in complex situations. Creates momentum through their energy and maintains focus on ambitious goals.

Practitioner Excellence

Displays excellence with core skill set and is strong at taking on varied responsibilities. Champions their core skill set within CDS as a go-to person for others, setting standards for Practitioner Excellence and mentoring future experts.

Proactivity and Drive

Brings visionary drive that motivates the organisation, balancing ambitious goals with sustainable energy and fostering a culture where positivity fuels performance. Creates organisational momentum that inspires people to achieve exceptional results.

Practitioner Excellence

Sets the vision, ambition and direction for CDS including associated technical credibility across all disciplines — operations, commercial, client service, delivery, technology and strategy. Cultivates environments where expertise thrives, ensures standards are forward-looking and robust, and makes strategic decisions grounded in a deep understanding of our people, culture, technology, risk and client outcomes.

Proactivity and Drive

Brings constructive ambition and a calm resolve. Steadies the organisation through uncertainty, energises people with clarity and removes the noise. Maintains focus on long-term value even when faced with short-term pressures. Creates organisational momentum, not dependency.

Togetherness¶

We're united in our common purpose, working collectively to transform our clients' organisations for the better.

People-First Mindset

Willingly helps teammates, shares knowledge openly, and prioritises team success over personal recognition. Contributes positively to team dynamics and actively supports colleagues in achieving shared goals.

Commercial and Business Acumen

Shows awareness of how their work contributes to client value and business outcomes, asking questions to understand the commercial context of decisions. Demonstrates interest in the broader business impact of their activities.

People-First Mindset

Actively collaborates across teams and disciplines, breaking down silos and ensuring collective success over individual achievement. Builds bridges between different groups and fosters a culture of mutual support and shared ownership.

Commercial and Business Acumen

Understands project budgets and client commercial drivers, making decisions that balance delivery quality with cost-effectiveness. Contributes to commercial discussions and identifies opportunities to maximise client value within constraints.

People-First Mindset

Leads in a way that empowers teams and encourages working together, building collaborative cultures and recognising team contributions above individual wins. Creates environments where diverse perspectives are valued and collective success is celebrated.

Commercial and Business Acumen

Exercises strong commercial judgement across projects, identifying opportunities for value creation, managing complex budgets, and contributing to commercial discussions with confidence. Balances client needs with business sustainability and growth.

People-First Mindset

Thinks at an organisational level to eliminate hero culture, creating systems and environments where diverse teams achieve exceptional results together. Shapes how CDS operates to ensure collaborative success becomes embedded in everything we do.

Commercial and Business Acumen

Thinks strategically about commercial matters that shape client relationships and business direction, influencing major commercial decisions and identifying new market opportunities. Positions CDS for sustainable growth whilst maintaining client value focus.

People-First Mindset

Unifies the organisation. Dissolves internal barriers, removes friction, role-models collaboration across disciplines, and creates systems where individuals and teams succeed collectively rather than through isolated heroics. Understands that their behaviour, actions and decisions shape culture — and acts accordingly.

Commercial and Business Acumen

Thinks and acts like custodians of the business. Evaluates decisions through the lens of long-term sustainability, client value, risk and return. Ensures CDS remains financially resilient, strategically positioned, and commercially well-judged.

Integrity¶

We always do what we say we will. We are honest, open and accurate — without exception.

Integrity and Honesty

Acts with honesty in all interactions, speaking up when something doesn't feel right and admitting when they don't know something. Builds trust through transparent communication and ethical conduct in all situations.

Open Communication

Communicates clearly and proactively with teammates and clients, providing timely updates on progress and challenges. Receives feedback with openness and willingness to learn, asking clarifying questions to understand how to improve.

Integrity and Honesty

Acts with integrity in decision-making and client interactions, having difficult conversations when needed and building trust through transparency. Demonstrates ethical leadership in challenging situations and role-models honest communication.

Open Communication

Maintains transparent, frequent communication with clients and colleagues, proactively managing expectations and addressing issues before they escalate. Provides timely, specific feedback to colleagues that focuses on actions and outcomes, helping others improve whilst also seeking feedback on their own performance.

Integrity and Honesty

Maintains strong integrity in complex situations, role-modelling honest communication with colleagues and clients even under pressure, and creating psychologically safe environments. Sets the tone for teams and ensures standards are upheld consistently.

Open Communication

Sets the standard for clear, honest client and team communication, ensuring stakeholders are aligned and informed throughout engagements. Delivers challenging feedback with care and clarity, creating dialogue that drives improvement and regularly coaching others on how to give and receive feedback effectively.

Integrity and Honesty

Provides ethical leadership that sets the standard for the organisation, ensuring integrity is embedded in everything CDS does. Creates the conditions for ethical decision-making and transparent conduct across all levels of the business.

Open Communication

Establishes communication excellence as an organisational standard, ensuring transparency in all client and internal interactions. Models exceptional feedback practices across the organisation, fostering a culture where constructive challenge is expected and valued.

Integrity and Honesty

Upholds uncompromising ethical standards. Acts with independence of judgement, even when decisions are uncomfortable, complex or scrutinised. Creates an environment where truth can be spoken, concerns can be raised without fear, and transparency is the default, not an aspiration.

Open Communication

Creates organisational norms for transparent, courageous communication at all levels — with clients, across teams, and throughout leadership. Sets the behavioural standards and psychological safety that enables challenge, and ensures both communication and feedback flow vertically and horizontally as habitual practice.

Curiosity¶

We are never afraid to ask "what if?", "why?" or "how?". We are inquisitive and intrigued to learn or discover a better way.

Adaptability

Remains flexible when priorities shift or approaches change, staying open to feedback and new ways of working. Embraces change as an opportunity to learn and demonstrates willingness to step outside their comfort zone.

Continuous Improvement

Displays curiosity about better ways of working, actively seeking feedback and learning from experiences to develop their skills. Shows initiative in identifying opportunities for personal development and skill enhancement.

Adaptability

Responds with agility to changing client needs and project dynamics, helping teams navigate uncertainty whilst maintaining momentum. Shows strong desire to take on responsibilities outside their comfort zone, embracing new challenges.

Continuous Improvement

Commits to improving processes and practices, experimenting with new approaches and sharing learnings with others. Identifies inefficiencies and takes action to address them, contributing to the evolution of team practices.

Adaptability

Shows resilience in complex, ambiguous situations, pivoting strategies when needed and helping others embrace change constructively. Comfortable operating outside their comfort zone and demonstrates versatility across varied responsibilities.

Continuous Improvement

Leads improvement initiatives, identifying systemic issues and implementing changes that benefit multiple teams or projects. Champions innovation and creates frameworks for sustainable improvement across the organisation.

Adaptability

Excels in navigating organisational change and market shifts, setting the tone for how CDS responds to uncertainty and evolves its approaches. Demonstrates strategic flexibility and is comfortable making decisions with imperfect information.

Continuous Improvement

Holds strategic vision for organisational improvement, championing innovation and establishing practices that elevate CDS's delivery excellence. Creates frameworks and systems that enable continuous evolution of capabilities and approaches.

Adaptability

Anticipates change rather than reacts to it. Adjusts strategies thoughtfully and brings people with them through uncertainty. Helps the organisation stay agile and match fit rather than defensive or reactive.

Continuous Improvement

Changes how the organisation works — not just how their part of it works. Identifies patterns, root causes and structural constraints, and addresses them. Stewards innovation by creating a safe space for experimentation, learning and organisational evolution. Improvement becomes cultural, not sporadic.

Challenging¶

We challenge the status quo. We positively and proactively look for ways to improve, innovate and inspire.

Leadership

Takes ownership of their own tasks and commitments, looking for opportunities to support others and contribute beyond their immediate responsibilities. Demonstrates accountability and seeks ways to add value to the team.

Client Obsessed

Shows awareness of customer needs and how their work impacts the end user or customer experience. Asks questions to understand client objectives and considers the customer perspective in their daily work.

Leadership

Leads initiatives and individuals, driving work forward and inspiring others through their actions and approach. Takes accountability for outcomes and empowers team members to take ownership of their contributions.

Client Obsessed

Maintains consistent focus on outcomes, making decisions that prioritise client success and advocating for customer needs. Builds strong working relationships with clients and anticipates their needs proactively. All decisions made have our clients at the centre.

Leadership

Leads teams and complex initiatives, taking accountability, creating environments where people thrive and delivering outcomes through others. Develops leadership capability in team members and shapes how work is approached and executed.

Client Obsessed

Possesses deep understanding of client and customer challenges, shaping approaches around value and building lasting client partnerships. Acts as a trusted adviser who anticipates client needs and delivers strategic insight. All decisions made have our clients at the centre.

Leadership

Leads across the organisation, taking strong accountability, influencing culture and direction, mentoring leaders, and shaping how CDS operates. Develops leadership capability at scale and ensures the organisation has the leadership strength needed for future success.

Client Obsessed

Maintains strategic focus that influences CDS's direction, ensuring client success remains central to how we grow and evolve. Shapes organisational strategy around deep understanding of client needs and market dynamics. All decisions made have our clients at the centre.

Leadership

Shapes direction, culture and consequence. Challenges organisational assumptions, invites challenge to their own thinking, and treats curiosity, challenge and opposing views as a sign of health, not disruption. Holds themselves and others to account for the long-term success of the company — even when it requires difficult decisions.

Client Obsessed

Maintains a strategic understanding of the markets and communities we serve, acting as trusted advisers rather than passive suppliers. Engages clients with courage and empathy — challenging assumptions, offering alternative perspectives, and guiding decisions that lead to better outcomes. Recognises that in the mission-critical environments we support, failure is not merely inconvenient but consequential.

Ways of Working¶

How we deliver projects, run teams, and maintain quality. This section covers the practices and approaches that define how CDS operates on the ground.

Agile Practices — How we apply agile methodologies in practice, not just in theory.

Delivery Approach — Our end-to-end approach to delivering projects for clients.

Test Approach — Our automation-first testing strategy, tools, and quality practices.

Agentic Engineering — How we use coding agents effectively and responsibly.

Agile Practices¶

At CDS, we follow agile principles pragmatically. We don't enforce a rigid framework — we adapt our approach to fit the context of each engagement while maintaining consistency in the practices that matter.

Our approach¶

We typically work in two-week sprints, though we adjust cadence based on client needs and project context. The important thing is a regular rhythm of planning, delivery, and reflection.

Ceremonies we value¶

Sprint planning¶

We plan collaboratively with the whole team. Everyone has input into what we commit to and how we break down the work.

Daily standups¶

Short, focused check-ins to surface blockers and keep the team aligned. We keep these to 15 minutes or less.

Retrospectives¶

Every sprint ends with a retro. We use a variety of formats to keep them fresh, but the goal is always the same: what can we do better next time?

Show and tell¶

We demo working software to stakeholders regularly. It builds trust, surfaces feedback early, and keeps everyone aligned on progress.

Estimation¶

We use story points for relative sizing, not as a measure of time. We find this encourages better conversations about complexity and risk.

Flexibility is key

These are our defaults, not rules. We adapt to fit the client's existing processes where it makes sense, and we're always open to trying new approaches.

Delivery Approach¶

Our delivery approach is designed to be lightweight, effective, and adaptable. We focus on delivering value early and often, with strong communication throughout.

Engagement lifecycle¶

Discovery¶

Before we write any code, we invest time in understanding the problem. This typically involves stakeholder interviews, technical assessment, and defining clear success criteria.

Delivery¶

We deliver iteratively, with working software demonstrated regularly. We prioritise ruthlessly and focus on the highest-value items first.

Transition¶

We don't just build and leave. We ensure proper knowledge transfer, documentation, and support planning so that our clients can maintain and extend what we've built.

Documentation standards¶

We believe in documentation that is useful, not documentation for its own sake. At a minimum, every project should have:

- A clear README with setup instructions

- Architecture decision records (ADRs) for significant technical decisions

- Runbooks for any operational processes

- Up-to-date API documentation where applicable

Quality¶

Quality is built in, not bolted on. We practise code review, automated testing, and continuous integration as standard. We define a clear "definition of done" at the start of each engagement and hold ourselves to it.

Architecture and Technical Decision-Making¶

Architecture at a consultancy is fundamentally different from architecture at a product company. We rarely start with a blank canvas. More often we arrive in an organisation with an existing technology landscape, established teams, inherited constraints, and decisions made years ago by people who have since moved on. Our job is to understand that context, work within it where it makes sense, challenge it where it does not, and leave behind systems that the client's own teams can confidently maintain, extend, and evolve long after our engagement ends.

That responsibility shapes how we think about architecture. We favour approaches that are easy to reason about over those that are clever. We document our decisions so that the people who inherit the system understand not just what we built but why. And we treat architecture as something that happens continuously throughout delivery, not as a phase that produces a document and then falls silent.

Our architecture principles¶

These principles guide our technical decision-making across engagements. They are not rigid rules. Different contexts demand different trade-offs, and a good architect knows when to deviate from a principle and can explain why. But these represent our defaults, the positions we start from unless there is a compelling reason to do otherwise.

Design for the team that comes next¶

Every system we build will eventually be owned by someone who was not in the room when the decisions were made. This is especially true in consultancy, where the handover is a known, planned event rather than an unlikely future. We design with that team in mind. We choose technologies the client can recruit for and support. We write code that is readable rather than merely concise. We favour established patterns over novel ones where both would solve the problem equally well. The cleverest solution is rarely the best one if the people maintaining it cannot understand it.

Prefer simplicity¶

Simple systems are easier to build, test, deploy, operate, debug, and change. Complexity should be introduced only when simpler alternatives have been genuinely evaluated and found insufficient. A modular monolith that a small team can reason about is often a better starting point than a distributed microservices architecture that demands platform engineering capability the client does not yet have. We earn the right to add complexity by first demonstrating that the simpler approach will not meet the need.

Make reversible decisions quickly, irreversible ones carefully¶

Not all decisions carry equal weight. Choosing a logging library is easily reversed. Choosing a cloud provider or a primary database engine is not. We calibrate the rigour of our decision-making to the cost of getting it wrong. Low-stakes, easily reversible decisions should be made quickly to maintain delivery momentum. High-stakes, difficult-to-reverse decisions deserve proper analysis, wider consultation, and formal documentation through an Architecture Decision Record.

Secure by design¶

Security is a design concern, not a compliance checkbox. We consider threat models during discovery, build security controls into the architecture from the outset, and treat security testing as a continuous activity throughout delivery rather than a gate at the end. For UK public sector engagements, we align with the NCSC Cloud Security Principles and design systems that support the shared responsibility model for cloud services. Secrets are managed through proper vaulting, access is controlled through least-privilege principles, and data classification informs how and where information is stored and transmitted.

Cloud-native by default, pragmatic by necessity¶

We design for the cloud because that is where most modern systems run and where the operational advantages are greatest. We use managed services where they reduce operational burden without creating unacceptable vendor lock-in. We containerise workloads for portability and consistency across environments. But we do not adopt cloud-native patterns dogmatically. If a client's constraints mean a hybrid approach is the right answer, or if a particular managed service creates more lock-in than it saves in operational effort, we adjust. The architecture should serve the client's situation, not an ideology.

Build for observability from the start¶

A system that cannot be observed in production cannot be operated reliably. We design logging, metrics, tracing, and health checks into the architecture from the beginning rather than retrofitting them when the first incident reveals we are flying blind. Structured logging, correlation identifiers across service boundaries, and meaningful health endpoints are standard expectations, not optional extras.
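As a rough illustration of what structured logging with a correlation identifier can look like, here is a minimal Python sketch using only the standard library. The names `bind_correlation_id`, `JsonFormatter`, `health`, the `orders` logger, and the shape of the health payload are all assumptions made for this example, not a CDS standard; a real service would normally lean on its framework's middleware and logging integration.

```python
import contextvars
import json
import logging
import uuid
from typing import Optional

# Correlation identifier for the current request; "-" means none has been set.
_correlation_id = contextvars.ContextVar("correlation_id", default="-")


def bind_correlation_id(incoming: Optional[str] = None) -> str:
    """Reuse the identifier passed by the caller, or mint a new one at the edge."""
    value = incoming or uuid.uuid4().hex
    _correlation_id.set(value)
    return value


class JsonFormatter(logging.Formatter):
    """Render each log record as one JSON object per line."""

    def format(self, record: logging.LogRecord) -> str:
        return json.dumps({
            "level": record.levelname,
            "logger": record.name,
            "message": record.getMessage(),
            "correlation_id": _correlation_id.get(),
        })


def health() -> dict:
    """Minimal health payload (assumed shape); real checks would probe dependencies."""
    return {"status": "ok", "checks": {}}


handler = logging.StreamHandler()
handler.setFormatter(JsonFormatter())
log = logging.getLogger("orders")  # hypothetical service name
log.addHandler(handler)
log.setLevel(logging.INFO)

bind_correlation_id("req-123")  # e.g. taken from an incoming request header
log.info("order %s placed", 42)
```

Because the identifier lives in a context variable, every log line emitted while handling the same request carries it without each call site passing it explicitly. Forwarding the same value on outbound calls is what lets logs be joined across service boundaries.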

Favour proven technology for core systems¶

We are not early adopters for the sake of it. Core systems that handle critical business logic, data persistence, and user-facing transactions should be built on technology with a strong track record, active community support, and a deep talent pool. Innovation and experimentation belong at the edges, in prototypes, in tooling, in areas where the cost of failure is low and the potential upside justifies the risk. This does not mean we avoid modern technology. It means we distinguish between technology that is modern and technology that is immature, and we are honest about which is which.

Architecture in the delivery lifecycle¶

Architecture is not a phase. It is a thread that runs through every stage of an engagement, from the earliest conversations about the problem through to handover and beyond.

During discovery¶

The most important architecture work often happens before a single line of code is written. During discovery we focus on understanding the existing landscape: what systems are already in place, how they integrate, where the pain points are, and what constraints are genuinely fixed versus merely assumed. We map out the non-functional requirements, the performance expectations, regulatory requirements, security considerations, and integration points, because these shape the architecture far more than functional requirements do.

Discovery is also where we identify the highest-risk architectural decisions and begin to work through them. If the engagement depends on a particular technology choice or integration approach working, we would rather discover that risk early through a spike or proof of concept than encounter it halfway through delivery when the cost of changing course is much higher.

During delivery¶

Architecture evolves as the system is built and understanding deepens. We do not attempt to design everything upfront because we have learned that the most useful architectural insights emerge through the act of building. Instead, we maintain a lightweight set of architecture documentation that evolves with the system and we use Architecture Decision Records to capture significant choices as they arise.

The architect, or whoever is fulfilling that role on a given engagement, is embedded in the delivery team rather than operating at a distance. They participate in sprint ceremonies, review pull requests, pair with developers on complex problems, and keep the broader architectural vision aligned with what is actually being built. Architecture that exists only in a slide deck and has diverged from the code is worse than no architecture documentation at all, because it actively misleads.

At handover¶

Handover is where the investment in documentation and decision-recording pays off most visibly. A well-maintained set of ADRs, a clear C4 context diagram, and an up-to-date record of integration points and operational concerns give the receiving team a running start. We plan the handover from the beginning of the engagement, not as an afterthought in the final sprint. If the client's team will own the system, we involve them in architectural discussions throughout delivery so that there is no gap in understanding when we step away.

Architecture Decision Records¶

Architecture Decision Records are one of the most valuable tools in a consultancy's toolkit. They capture the context, options considered, and rationale behind significant technical decisions in a format that is lightweight enough to actually be maintained and detailed enough to be useful months or years later when someone asks "why did we do it this way?"

Why we use them¶

In a product company, the people who made a decision are often still around when someone questions it. In consultancy, they frequently are not. ADRs bridge that gap. They give the client's team a clear record of what was decided, what alternatives were considered, and why the chosen approach was preferred. They also provide an audit trail that is increasingly valued in public sector engagements, where the GOV.UK Architectural Decision Record Framework encourages consistent documentation of technology decisions across government.

Beyond handover, ADRs improve the quality of decision-making in the moment. The discipline of writing down the context, constraints, and trade-offs forces a rigour that purely verbal discussions often lack. Decisions that seemed obvious in a meeting room sometimes look quite different when the reasoning has to be articulated clearly enough for someone else to follow.

When to write one¶

Not every decision needs an ADR. We write them for decisions that are significant, meaning they are difficult to reverse, affect the system's structure in a material way, involve meaningful trade-offs between competing concerns, or are likely to be questioned in future. Typical triggers include choosing a primary technology or framework, defining the integration approach with an external system, selecting an authentication or authorisation strategy, deciding between architectural patterns such as synchronous versus asynchronous communication, and establishing the deployment and hosting model.

If a decision can be easily changed later without significant rework, it probably does not need an ADR. If the team is likely to forget why a decision was made within six months, it probably does.

Our template¶

We keep the format simple. An ADR that is too burdensome to write will not get written. Our standard template covers the following sections.

Title and date. A short, descriptive title and the date the decision was made. We number ADRs sequentially within each project so they form a chronological record.

Status. One of proposed, accepted, superseded, or deprecated. This makes it clear whether a decision is still current.

Context. The situation that prompted the decision. What problem are we solving? What constraints are we working within? What are the relevant non-functional requirements? This section is arguably the most important because it captures the information that is most likely to be lost over time.

Options considered. A brief description of each viable option, with its trade-offs. This section demonstrates that the decision was deliberate rather than default, and it helps future readers understand what was ruled out and why.

Decision. What we decided and a clear statement of the rationale. This should be specific enough that someone who was not involved can understand the reasoning.

Consequences. What follows from this decision, both positive and negative. What becomes easier? What becomes harder? What new constraints does this create? Honest articulation of the downsides builds trust in the record's integrity.

This structure aligns with the widely adopted format originally proposed by Michael Nygard and is compatible with the GOV.UK ADR Framework used across the UK public sector.
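As a rough illustration, the sections above could be laid out as a Markdown page like the one below. The ADR number and every bracketed placeholder are hypothetical; this is one possible skeleton under those assumptions, not a mandated layout.

```markdown
# ADR 0007: [Short, descriptive title]

- Date: [YYYY-MM-DD]
- Status: [proposed | accepted | superseded | deprecated]

## Context

[The problem being solved, the constraints we are working within,
and the relevant non-functional requirements.]

## Options considered

1. [Option A]: [brief description and trade-offs]
2. [Option B]: [brief description and trade-offs]

## Decision

[What we decided, and a clear statement of why.]

## Consequences

[What becomes easier, what becomes harder, and any new constraints.]
```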

Where they live¶

ADRs live in the project wiki in Azure DevOps rather than buried in the code repository. The wiki is the natural home because it is immediately visible to everyone on the project, including client stakeholders who may not routinely browse the repo, and it does not require a pull request to read or update. We typically create an "Architecture Decisions" section in the project wiki with each ADR as a separate page, numbered sequentially. Since Azure DevOps wikis are backed by a Git repository under the hood, the version history and diff capability are still there when needed. ADRs are written in Markdown, which renders neatly in the wiki and can be exported or migrated if the project moves to a different platform.

Documenting architecture¶

Good architecture documentation is the minimum set of information someone needs to understand, operate, and change a system. We aim for documentation that is lightweight enough to be maintained as the system evolves and specific enough to be useful when it matters.

The C4 model¶

We use the C4 model as our default approach to visualising software architecture. Created by Simon Brown, C4 provides four levels of abstraction, from the broadest system context down to individual code structures, letting us communicate the architecture at the right level of detail for the audience.

Context diagrams show the system in its environment: who uses it, what other systems it interacts with, and where the boundaries are. These are useful for stakeholders, governance boards, and anyone who needs to understand the system without getting into technical detail. Every project should have one.

Container diagrams show the major technical building blocks: web applications, APIs, databases, message queues, and the like. They convey the high-level technology choices and how the components communicate. This is the level that most developers and architects find most useful day to day.

Component diagrams show the internal structure of a container. These are useful during detailed design but can quickly become stale if maintained manually. We produce them when they add genuine value and accept that they may represent a point-in-time view rather than a living document.

Code-level diagrams are rarely worth producing manually. If the codebase is well structured, the code itself serves as this level of documentation. IDE tools and automated generation can supplement where needed.

Diagrams as code¶

We prefer diagramming tools that treat diagrams as code rather than as binary files locked inside a proprietary application. Our default choice is Mermaid, which renders in Azure DevOps wikis without extra tooling and in MkDocs and most other Markdown platforms with little or no configuration. Mermaid covers the diagram types we reach for most often in practice: flowcharts, sequence diagrams, C4 context and container views, and entity relationship diagrams among others. Support for individual diagram types varies between platforms, so we check that the target platform renders a given type before relying on it. Mermaid diagrams can also be rendered to PNG, SVG, and PDF when they need to appear in documents or presentations.

The real advantage of writing diagrams in Mermaid rather than drawing them in Visio or draw.io is that changes are tracked through version control in the same way as any other text file. Reviewers can see exactly what changed in a diff without having to open two versions of a diagram side by side and squint at the differences. There is no fiddling with box positions and arrow routing when the only thing that actually changed was an added dependency between two services. The tooling gets out of the way and the content stays front and centre.
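To make this concrete, here is a minimal sketch of a container-style view written as a Mermaid graph. The system and all of its components are hypothetical and exist only for illustration. Adding a new dependency is a single extra line, which is exactly what a reviewer would see in the diff.

```mermaid
graph LR
    user(Citizen) --> web[Web application]
    web --> api[Case API]
    api --> db[(Case database)]
    api --> queue[Notification queue]
```

At the time of writing, Azure DevOps wikis expect the same diagram source inside a `::: mermaid` container rather than a fenced block, so the wrapper may need adjusting when a page moves between platforms.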

Working with public sector clients¶

Many of our clients operate within the UK public sector, where architecture decisions are guided by established frameworks and standards. We are familiar with these frameworks and design our approach to align with them, which reduces friction for our clients and demonstrates that we understand the environment they operate in.

The Technology Code of Practice¶

The GOV.UK Technology Code of Practice sets out thirteen criteria that guide how government organisations design, build, and buy technology. Several of these have direct implications for architecture: designing for interoperability, using open standards, avoiding vendor lock-in, making source code open where appropriate, and ensuring technology choices are sustainable. We ensure our architectural recommendations are compatible with these principles, and where a client is subject to Cabinet Office spend controls, we structure our documentation to support the assurance process.

NCSC Cloud Security Principles¶

The National Cyber Security Centre's fourteen Cloud Security Principles form the baseline for evaluating cloud service security in the UK public sector. When we design cloud architectures for government clients, we assess our approach against these principles, covering data protection in transit and at rest, supply chain security, operational security, and personnel security among others. For clients procuring services through G-Cloud, alignment with NCSC principles is expected rather than optional.

The GOV.UK Service Standard¶

Where the system we are building constitutes a public-facing service, it will typically need to meet the GOV.UK Service Standard. Several points in the standard have architectural implications, including designing for accessibility, building a multidisciplinary team, iterating based on user research, and choosing the right tools and technology. We factor these requirements into our architecture from the outset rather than treating them as constraints to be addressed retroactively.

Common trade-offs we navigate¶

Architecture is the art of managing trade-offs. Every decision involves giving something up to gain something else, and the skill lies in making those trade-offs deliberately and transparently. These are some of the trade-offs we encounter most frequently.

Build versus buy. Building bespoke software gives maximum control and fit but carries ongoing maintenance cost. Buying or adopting a managed service reduces maintenance burden but introduces dependency and may not fit the exact need. We evaluate this trade-off on a case-by-case basis, leaning towards buying where the capability is not a competitive differentiator for the client and building where the specific requirements are genuinely unique.

Consistency versus autonomy. In larger systems with multiple teams, there is a tension between enforcing consistency of technology choices and allowing teams the autonomy to pick the best tool for their context. Too much consistency and teams are forced into suboptimal choices. Too much autonomy and the organisation ends up with an unmanageable zoo of technologies that nobody can recruit for or support. We generally advocate for a "paved road" approach: a supported, well-documented default stack that teams should use unless they have a compelling reason not to, with a lightweight governance process for exceptions.

Upfront design versus emergent architecture. Designing everything before building anything risks producing an architecture that does not survive contact with reality. Designing nothing risks ending up with accidental architecture shaped by expedient short-term decisions. We aim for the middle ground: enough upfront thinking to establish the major structural decisions and reduce risk, combined with the flexibility to adapt as understanding deepens through delivery. ADRs are the mechanism that makes this work, capturing decisions as they emerge rather than requiring them all to be made at the start.

Optimising for now versus optimising for later. Systems can be over-engineered for a future that never arrives or under-engineered for a present that quickly becomes unsustainable. We try to build for current known requirements with a clear understanding of likely future directions. This means designing interfaces and boundaries that will accommodate growth without building the growth capability itself until it is needed. The principle of "make it easy to change" is more durable than "predict the change".
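One way to picture "make it easy to change" is a narrow interface at a boundary with only today's implementation behind it. The sketch below is illustrative: `Notifier`, `InMemoryNotifier`, and `confirm_booking` are hypothetical names, and the in-memory class stands in for whatever single delivery channel a project needs now.

```python
from typing import Protocol


class Notifier(Protocol):
    """The boundary the rest of the system depends on (hypothetical name)."""

    def send(self, recipient: str, message: str) -> None: ...


class InMemoryNotifier:
    """The only implementation built today; it records messages in a list."""

    def __init__(self) -> None:
        self.sent: list[tuple[str, str]] = []

    def send(self, recipient: str, message: str) -> None:
        self.sent.append((recipient, message))


def confirm_booking(notifier: Notifier, recipient: str, reference: str) -> None:
    # Callers know only the interface, so a second channel can be added later
    # without touching this function.
    notifier.send(recipient, f"Booking {reference} confirmed")


notifier = InMemoryNotifier()
confirm_booking(notifier, "alex@example.com", "BK-123")
print(notifier.sent)
```

The boundary is cheap to define now. The additional channels are not built until a requirement for them actually appears.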

Architecture is a conversation

The most effective architecture work we do happens through ongoing dialogue with delivery teams, stakeholders, and the client's own technical leadership. A brilliant architecture that nobody understands or agrees with is worth less than a good-enough architecture that everyone can work with.

Test Approach¶

Testing is fundamental to how we deliver at CDS. We take an automation-first approach, building quality in from the start rather than inspecting it in at the end. Our testing practices are modern, pragmatic, and shaped by real delivery experience.

The purpose of testing at CDS is to shorten feedback loops, catch defects earlier, and support CI/CD without friction. Everything we do in testing is oriented around helping agile delivery squads build clear, maintainable, and scalable test automation.

Core principles¶

Quality isn't a phase. It's how we work.

These are the principles that guide every testing and quality decision we make — on every engagement, for every client.

We believe quality is built in from the start, owned by the whole team, and never treated as a box to tick before release.

01 — Automation First¶

We automate where it adds real value — so your team can focus on the testing that requires human judgement.

Automation is our default, not an afterthought. We build automated tests alongside the product code — not after the fact — so regressions are caught early and confidence in the codebase is maintained continuously.

That said, automation isn't unconditional. We weigh the cost of building and maintaining a test against the value it delivers. Where manual or exploratory testing adds more value, that's the approach we take. The goal is the right coverage, not the highest number of automated tests.

02 — Shift Left¶

We embed testing in design and requirements — because defects caught early cost a fraction of those caught late.

Testers don't wait for finished code. They're involved from the moment requirements are being shaped — challenging assumptions, identifying edge cases, and making sure the team is building the right thing before a line of code is written.

Developers and testers work together throughout, not in sequence. Unit, API, contract, and integration tests form the foundation of our automation — following the test pyramid model, where fast, targeted tests at the base support the broader, higher-level checks above.

03 — Fail Fast¶

Tests are woven into the delivery pipeline. Issues surface immediately — not at the end of a sprint.

We integrate tests into CI/CD pipelines so that every change is validated automatically. A failing build stops progress until the issue is resolved. A passing pipeline isn't a formality; it's the mechanism by which we maintain confidence in the codebase at all times.

Non-functional failures — whether in performance, accessibility, or security — are treated with the same seriousness as functional regressions. Exceptions exist, but they are deliberate and always accompanied by a clear remediation plan.

04 — Keep It Simple, Reuse Often¶

Test code is real code. We hold it to the same standards — and build shared foundations that every team can benefit from.

We don't treat test code as second-class. It should be consistent, readable, and maintainable — subject to the same standards and processes as the production code it supports.

We invest in shared automation libraries across engagements — utilities, reporting wrappers, test data generators — so that teams spend their time on meaningful test design, not rebuilding common infrastructure from scratch on every project.
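As an example of the kind of utility that belongs in a shared library, here is a minimal sketch of a seeded test data generator. The `Applicant` shape, the field names, and the list of postcode areas are all hypothetical; the point is only that a fixed seed makes generated data reproducible between runs.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Applicant:
    reference: str
    age: int
    postcode_area: str


def make_applicants(count: int, seed: int = 0) -> list[Applicant]:
    """Return `count` synthetic applicants; the same seed always yields the same data."""
    rng = random.Random(seed)  # private generator, so global random state is untouched
    areas = ["LS", "M", "SW", "EH", "CF"]
    return [
        Applicant(
            reference=f"APP-{n:05d}",
            age=rng.randint(18, 90),
            postcode_area=rng.choice(areas),
        )
        for n in range(1, count + 1)
    ]


for applicant in make_applicants(3, seed=42):
    print(applicant)
```

Reproducible data means a failing test can be rerun with the same inputs, which makes the failure far easier to diagnose.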

05 — The Right Tool for the Situation¶

We're tool-agnostic by design. We choose what fits — not what's most familiar.

Different clients, tech stacks, and project constraints call for different tools. We maintain preferred defaults and a broad toolkit of frameworks we're experienced with — but these are starting points, not constraints.

The best tool for the job always wins over the most familiar one. We're comfortable working within existing ecosystems, introducing new approaches where they add value, and making a clear case when a change in tooling is the right call.

06 — Coverage as a Risk Indicator, not a Gate¶

We use coverage data to understand risk — not to hit a number.

Coverage statistics tell us where risk lives — untested code paths, overlooked edge cases, modules that have grown without corresponding test investment. That's genuinely useful information.

What we don't do is enforce arbitrary thresholds. Hard gates drive the wrong behaviour: teams write low-value tests to satisfy a metric rather than meaningful tests that catch real defects. We'd rather have 70% coverage of well-written, targeted tests than 95% coverage of tests that assert nothing useful.
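The difference is easy to show. In this illustrative sketch (the `vat_inclusive` function is a made-up example), both tests give the function full line coverage, but only the second could ever catch a defect.

```python
def vat_inclusive(net_pence: int, rate_percent: int = 20) -> int:
    """Add VAT to a net price in pence, rounding the tax down to a whole penny."""
    return net_pence + (net_pence * rate_percent) // 100


def test_executes_but_checks_nothing():
    # Every line of vat_inclusive is covered, yet no bug could ever fail this test.
    vat_inclusive(1000)


def test_checks_behaviour_that_matters():
    assert vat_inclusive(1000) == 1200                 # standard rate
    assert vat_inclusive(0) == 0                       # zero stays zero
    assert vat_inclusive(999, rate_percent=5) == 1048  # 49.95p of tax rounds down to 49p
```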

Underlying all of this is a commitment to testability. Coverage data is only meaningful if the product is built to be testable in the first place. We advocate for testability from the earliest design decisions — ensuring code is structured so that behaviour can be isolated, validated, and understood. A product that is hard to test is a product that carries hidden risk. We make testability a first-class concern, not an afterthought.
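A small sketch of what designing for testability can look like in practice: instead of reading the system clock directly, the function below accepts the clock as a parameter, so a test can pin "today" to any date it likes. The function and parameter names are illustrative.

```python
from datetime import date
from typing import Callable


def is_overdue(due: date, today: Callable[[], date] = date.today) -> bool:
    """Return True once the current date is past `due`."""
    return today() > due


def test_overdue_the_day_after_the_deadline():
    assert is_overdue(date(2025, 3, 31), today=lambda: date(2025, 4, 1))


def test_not_overdue_on_the_deadline_itself():
    assert not is_overdue(date(2025, 3, 31), today=lambda: date(2025, 3, 31))
```

Production callers use the default and get the real clock. Tests pass in a fixed date and get a deterministic result.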

07 — Non-Functional Quality is Built In, Not Bolted On¶

Accessibility, performance, security, and visual integrity are part of quality from day one — not audits before go-live.

These aren't separate workstreams or last-minute checklists. They are first-class quality concerns, tested continuously and owned by the whole team:

- Accessibility is validated as part of every regression cycle, not deferred to a pre-launch review.

- Performance is monitored against agreed baselines throughout delivery. Degradation is caught early, not discovered in production.

- Security is raised during design and requirements — vulnerabilities are far cheaper to address before build than after.

- Visual regression is automated where stable baselines can be maintained, so unintended UI changes are caught as part of normal test execution.

Non-functional quality is continuous, not a last-minute checklist.

08 — Quality is Everyone's Job¶

Testers set the standard and provide the expertise. The whole team is responsible for meeting it.

Quality doesn't belong to a single function. Developers write tests. Business analysts write testable acceptance criteria. Architects consider testability in design. Everyone raises risks early and holds the team to best practices.

Our testers provide expertise, standards, and guidance — but they are not a gate at the end of the line. Quality is built in from the start. It is never bolted on at the end.

Scope of testing¶

Automation¶

The scope of our automation includes unit, component, API, integration, regression, smoke, security, accessibility, and performance tests, as well as automated acceptance tests tied to user stories. We prioritise business-critical, repetitive, and high-risk scenarios for automation first.

Test validation¶

Test validation focuses on where human judgement adds the most value: exploratory testing, UX/UI validation, accessibility auditing, and ad-hoc testing. High-value one-off scenarios that don't justify the cost of automation are also tested manually, using whichever technique best fits the scenario.

Types of testing¶

We apply multiple layers of testing to build confidence at every level of the system, following the test pyramid model.

Unit testing forms the foundation. Fast, isolated, and cheap to run, unit tests verify that individual components behave as expected. We use the appropriate framework for the language — xUnit and NUnit for .NET, Jest for JavaScript and TypeScript, pytest for Python, and equivalents elsewhere. Unit tests run on every commit and are expected to pass before code is merged.
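For illustration, a unit test at this level might look like the sketch below, written as plain `test_` functions that pytest would discover. The `normalise_postcode` function is a simplified, hypothetical example rather than a full postcode validator.

```python
def normalise_postcode(raw: str) -> str:
    """Upper-case a UK-style postcode and put one space before the final three characters."""
    compact = "".join(raw.split()).upper()
    if not 5 <= len(compact) <= 7:
        raise ValueError(f"unexpected postcode length: {raw!r}")
    return f"{compact[:-3]} {compact[-3:]}"


def test_adds_the_space_and_upper_cases():
    assert normalise_postcode("sw1a1aa") == "SW1A 1AA"


def test_collapses_stray_whitespace():
    assert normalise_postcode("  m1   1ae ") == "M1 1AE"
```

With pytest installed, `pytest.raises(ValueError)` would be the idiomatic way to cover the rejection branch as well.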

API and integration testing verifies that components work together correctly. This is where we catch issues with data flows, service boundaries, and external dependencies. We use tools like Postman and Newman extensively for API-level testing, validating contracts, response structures, and error handling across service boundaries.

End-to-end testing validates complete user journeys through the system. We use Playwright as our primary tool for browser-based E2E testing — it's fast, reliable, and supports multiple browsers out of the box. E2E tests are powerful but expensive to maintain, so we focus them on critical user paths rather than trying to cover every permutation.

Performance testing ensures the system can handle expected (and unexpected) traffic. We baseline early in the sprint cycle, run load and stress tests post-merge on staging environments, and set performance SLAs for response times, throughput, and latency. Performance testing is built into the delivery lifecycle rather than left as a last-minute activity.
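A response-time target is only useful if something checks it. The sketch below shows one simple way to compare a set of measured response times with a 95th-percentile budget, using the nearest-rank method. The function names, the sample figures, and the 300 ms budget are all assumptions made for the example; real load tools such as k6, JMeter, or Locust report percentiles themselves.

```python
def percentile(samples: list[float], p: int) -> float:
    """Nearest-rank percentile: the smallest sample with at least p% of values at or below it."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = max(1, (p * len(ordered) + 99) // 100)  # integer ceiling of p% of n
    return ordered[rank - 1]


def breaches_budget(samples_ms: list[float], p95_budget_ms: float) -> bool:
    """Return True when the 95th-percentile response time exceeds the agreed budget."""
    return percentile(samples_ms, 95) > p95_budget_ms


# Hypothetical response times in milliseconds, with one slow outlier.
measured = [120, 135, 128, 140, 131, 126, 138, 133, 129, 410]
print(percentile(measured, 95))
print(breaches_budget(measured, p95_budget_ms=300))
```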

Security testing is integrated throughout the pipeline. We run static analysis (SAST) on commit and pull request, and dynamic testing (DAST) as part of nightly or full pipeline runs. Teams are educated on common vulnerabilities, with the OWASP Top 10 as a baseline.

Accessibility testing combines automated checks integrated into the UI pipeline with manual audits on high-traffic flows before release. Accessibility is not an afterthought — it's part of our standard testing scope.

Testing in the delivery lifecycle¶

Testing isn't a phase — it's woven into every stage of our agile delivery process.

Backlog grooming includes test scenarios and a testability review. How will we test this? What does "done" look like? What are the riskiest areas that need the most coverage? These questions shape the technical approach from the outset.

Sprint planning defines test automation tasks per story on the project board, split into test design and automation tasks so they're visible and estimated alongside development work.

During the sprint, we automate during development, not after. Developers write tests alongside their code. Tests run locally before pushing, and the CI pipeline validates them on every commit and pull request. Code reviews include reviewing the quality and coverage of tests, not just the production code. Teams build and use shared components where applicable.

Definition of Done includes unit, API, UI automation, and non-functional coverage. A story isn't done until it's tested.

Sprint review includes a demo of test coverage, giving the team and stakeholders visibility of quality alongside functionality.

Exploratory testing complements the automated suite throughout. Skilled testers think creatively about how the system might fail, test edge cases that automated tests wouldn't cover, and bring a user's perspective that pure automation misses. Automation handles the repetitive checks; people handle the thinking.

Continuous testing in the pipeline¶

Our CI/CD pipelines enforce quality automatically. A typical pipeline runs tests at multiple trigger points:

| Trigger | What runs |
|---|---|
| Code commit | Unit tests |
| Pull request | API and UI tests |
| Nightly / scheduled | Full regression, non-functional tests (performance, security, accessibility) |
| Deployment | Smoke tests to verify the deployment is healthy |

If tests fail, the pipeline fails. This is the automation-first mindset in practice — trust the pipeline and keep it green.
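As a rough sketch of how the first three trigger points in the table above might be wired up, here is an illustrative GitHub Actions workflow. The `./scripts/*.sh` entry points are hypothetical placeholders for whatever commands a project actually uses, and deployment smoke tests are left out because they depend on how each project deploys.

```yaml
# Illustrative only: the ./scripts/*.sh entry points are hypothetical.
name: tests

on:
  push:                     # every commit
  pull_request:             # every pull request
  schedule:
    - cron: "0 2 * * *"     # nightly at 02:00 UTC

jobs:
  unit:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - run: ./scripts/unit-tests.sh

  api-and-ui:
    if: github.event_name == 'pull_request'
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - run: ./scripts/api-tests.sh
      - run: ./scripts/ui-tests.sh

  nightly:
    if: github.event_name == 'schedule'
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - run: ./scripts/full-regression.sh
      - run: ./scripts/non-functional-tests.sh
```

Any step that exits with a non-zero status fails its job, which is what turns a failing test into a failing pipeline.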

Governance and standardisation¶

We maintain consistency across projects without stifling flexibility.

Centralised frameworks and libraries enable faster onboarding, easier knowledge transfer, and reduced maintenance. Common utilities, logging wrappers, reporting tools, and test data generators are shared across teams and open to contribution.

Coding standards for test code are enforced consistently. Test code is production code — it should be clean, readable, and maintainable.

Documentation standards include a test strategy per project (aligned to our organisation-wide strategy), versioned test cases with traceability to requirements, and clear READMEs. Tests themselves are treated as live documentation — they should be readable enough that a new team member can understand the system's behaviour from the test suite.

Dashboards provide visibility of automation job status, test coverage trends, and quality metrics across projects.

Metrics and reporting¶

We track and report on metrics that drive meaningful improvement:

- Test coverage — as a risk indicator, not a target (see our principles above)

- Automation pass/fail rates — to monitor test suite health and catch flaky tests

- Defects caught pre vs post production — the clearest measure of whether shift-left is working

- Non-functional benchmarks — performance baselines, response time trends, throughput

- Security vulnerability trends — tracking resolution rates and patterns over time
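Two of these metrics are simple enough to sketch in a few lines. The example below assumes one possible definition of each: escape rate is the share of known defects found after release, and a test is treated as flaky if it both passed and failed against the same commit. The function names and the run-record shape are hypothetical.

```python
from collections import defaultdict


def escape_rate(pre_production: int, post_production: int) -> float:
    """Share of all known defects that were found only after release."""
    total = pre_production + post_production
    return post_production / total if total else 0.0


def flaky_tests(runs: list[tuple[str, str, bool]]) -> set[str]:
    """Return tests that both passed and failed against the same commit.

    Each run is a (test_name, commit_sha, passed) tuple.
    """
    outcomes: dict[tuple[str, str], set[bool]] = defaultdict(set)
    for name, commit, passed in runs:
        outcomes[(name, commit)].add(passed)
    return {name for (name, _), seen in outcomes.items() if len(seen) == 2}


runs = [
    ("login", "abc123", True),
    ("login", "abc123", False),   # same commit, different outcome: flaky
    ("search", "abc123", True),
    ("search", "def456", False),  # different commit: a genuine change, not flakiness
]
print(escape_rate(pre_production=45, post_production=5))
print(flaky_tests(runs))
```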

Tools we use¶

We select tools based on the client's tech stack, team capability, and project needs. Tools we have deep experience with include:

| Purpose | Tools |
|---|---|
| Unit testing | xUnit, NUnit, Jest, pytest, and language-appropriate equivalents |
| API testing | Postman, Newman |
| E2E / browser testing | Playwright |
| Performance testing | k6, JMeter, Locust |
| Security testing | SAST and DAST tooling integrated into pipelines |
| Accessibility testing | Automated checks in UI pipelines, manual audits |
| CI/CD integration | Azure DevOps Pipelines, GitHub Actions |

This isn't an exhaustive list — if a project needs something different, we'll adopt it. The principle is always to use the best tool for the job, not the most familiar one.

AI-assisted testing¶

We use AI tools to enhance our testing and development workflows. Tools like Claude Code and GitHub Copilot help us with both technical tasks — scaffolding test frameworks, generating test cases, writing automation code — and non-technical tasks like documentation and test planning.

AI is an accelerator, not a replacement. Everything AI-assisted goes through human review. We use these tools safely and responsibly, with a human always in the loop to validate output, catch errors, and apply the engineering judgement that AI can't.

We continue to explore how AI can add value in areas like visual testing, coverage analysis, and identifying patterns in test failures, adopting new capabilities where they prove genuinely useful.

Automation-first doesn't mean automation-only

Our strongest test strategies combine a robust automated suite with targeted exploratory testing. Automation catches the known risks; skilled testers find the unknown ones.

Agentic Engineering¶

Coding agents — tools like Claude Code that can read, write, and execute code autonomously — are changing how software gets built. At CDS, we use these tools daily. This page captures the practices and disciplines we've adopted to get the most from them without sacrificing quality.

The core insight is straightforward: writing code is cheap now, but delivering good code is not. Agents can produce hundreds of lines in minutes, but the responsibility for correctness, clarity, and maintainability still sits with us. The patterns below help us bridge that gap.

Good code still has a cost¶

Agents dramatically reduce the cost of typing code into a computer. They do not reduce the cost of ensuring that code is worth keeping. Good code:

- Works correctly and handles error cases gracefully

- Solves the right problem, not just a problem

- Is protected by tests that catch regressions

- Is simple and minimal — does only what's needed

- Has documentation that reflects the current state of the system

- Meets non-functional requirements: security, accessibility, performance, maintainability

Every line an agent writes still needs a human to confirm it meets these standards. The speed of generation makes this discipline more important, not less.

Don't inflict unreviewed code on colleagues¶

This is the single most important rule for teams using coding agents.

Do not submit pull requests with code you haven't reviewed yourself.

If you open a PR with hundreds of lines that an agent produced and you haven't verified it works, you're delegating the actual work to your reviewers. They could have prompted the agent themselves — what value are you providing?

A good agentic engineering pull request:

- Works, and you're confident it works. You've run it, tested it, and verified the behaviour yourself.

- Is small enough to review efficiently. Several small PRs are better than one large one. Agents make splitting work into separate commits straightforward.

- Includes context. What's the goal? Link to relevant work items or specifications. Explain implementation choices that aren't obvious.

- Has a description you've actually read. Agents write convincing-looking PR descriptions. Review these too — it's disrespectful to expect someone to read text you haven't validated yourself.

Include evidence that you've done the work: notes on how you tested it, comments on specific decisions, screenshots, or a short video of the feature working. This goes a long way to showing reviewers that their time won't be wasted.

Use agents to pay down technical debt¶

A common category of technical debt is changes that are simple but time-consuming:

- An API design that doesn't cover an important case, requiring changes in dozens of places

- A naming decision made early on that's now confusing but too tedious to fix everywhere

- Duplicate functionality that's grown organically and needs consolidating

- A file that's grown to several thousand lines and needs splitting into modules

These refactoring tasks are an ideal application of coding agents. Fire up an agent, describe the change, and let it work through the codebase. Evaluate the result in a PR. If it's good, land it. If it's close, prompt further. If it's poor, discard it.

The cost of these improvements has dropped so far that we can afford a much lower tolerance for code smells and inconsistencies than we could before.

Exploratory prototyping¶

Any software development task comes with multiple approaches. Some of the most costly technical debt comes from making poor choices at the planning stage — missing an obvious solution or picking a technology that turns out to be wrong.

Coding agents make exploratory prototyping nearly free. Need to know if Redis is the right choice for an activity feed under load? Have an agent wire up a simulation and run a load test. Want to compare two approaches to a data pipeline? Run both in parallel and evaluate the results.

This is especially valuable during discovery and architecture phases. Instead of debating options in a meeting, prove them out with working prototypes. Since prototypes are cheap, run multiple experiments at once and pick the approach that best fits the problem.

Build and share institutional knowledge¶

A significant part of engineering skill is knowing what's possible and roughly how to do it. Can a web page run OCR in JavaScript alone? Can we process a 100GB file without loading it into memory? The more answers you have to questions like these, the more opportunities you'll spot.

With agents, you only need to figure out a useful technique once. Document it with a working code example — in a repository, a wiki page, or a shared library — and any agent can consult that example to solve similar problems in the future.
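The large-file question above is a good example of a technique worth documenting once. A minimal sketch, assuming the task is to checksum a file that is too big to hold in memory, reads it in fixed-size chunks. The function name and the 1 MiB chunk size are illustrative choices.

```python
import hashlib
from pathlib import Path


def sha256_of_file(path: Path, chunk_size: int = 1024 * 1024) -> str:
    """Hash a file of any size while reading at most `chunk_size` bytes at a time."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        # read() returns b"" at end of file, which ends the loop.
        while chunk := handle.read(chunk_size):
            digest.update(chunk)
    return digest.hexdigest()
```

The same read-a-chunk, process-a-chunk shape applies to counting, filtering, or transforming large files. That is what makes a short working example like this reusable by people and agents alike.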

At CDS, this is particularly valuable because our consultants move between engagements. Patterns and solutions that worked on one project become reusable assets for the next. Practical ways to do this:

- CLAUDE.md files in every repository, capturing project conventions and context

- Shared code libraries with proven patterns and utilities

- Architecture decision records that explain not just what was decided, but what was tried and discarded

- Working examples of techniques that might be useful on future engagements

The key idea is that agents are excellent at recombining existing working solutions. Two documented patterns that individually solve small problems can be combined by an agent into a solution for a much larger one.

The compound engineering loop¶

Agents follow instructions. We can evolve those instructions over time to get better results from future runs.

The most effective way to improve agent output is to end each significant piece of work with a brief retrospective:

- What worked well in the agent session?

- What needed correction or rework?

- What instructions or context would have prevented those issues?

Capture the answers in the project's CLAUDE.md file, shared libraries, or team documentation. Small improvements compound — each session benefits from every previous one.

This fits naturally into our existing agile retrospective practices. Add "how did we use agents this sprint?" as a standing retro topic and you'll see steady improvement in output quality over time.

Build new habits¶

Many of our engineering instincts are built around the assumption that writing code is expensive. We spend time designing, estimating, and planning to ensure our coding time is spent efficiently. At the micro level, we constantly weigh tradeoffs: is it worth refactoring that function? Writing documentation? Adding a test for this edge case?

Agents disrupt these intuitions. When the cost of trying something drops to near zero, the right response is to try it. Any time your instinct says "don't build that, it's not worth the time," consider firing off a prompt anyway. The worst outcome is you check back later and find it wasn't useful.

This doesn't mean accepting everything an agent produces. It means being willing to explore more options, prototype more aggressively, and invest in quality improvements that previously felt too expensive to justify. The goal is to use cheap code generation to produce better software, not just more of it.

AI should help us produce better code

If adopting coding agents reduces the quality of what you're shipping, something in your process needs fixing. Shipping worse code with agents is a choice. Choose to ship better code instead — with more tests, cleaner architecture, less technical debt, and documentation that actually reflects reality.


Tools & Technologies¶

The tools and technologies we use and recommend. We pick the right tool for the situation rather than enforcing a fixed stack, but these are the ones we have deep experience with and use most frequently.

Claude Code — How we use AI-assisted development to work faster without sacrificing quality.

Azure DevOps — Our primary platform for source control, CI/CD, and project management.

Claude Code¶

Claude Code is a command-line tool from Anthropic that allows developers to delegate coding tasks to Claude directly from their terminal. At CDS, we use Claude Code as a core part of our development workflow.

Why we use it¶

Claude Code allows us to work faster without sacrificing quality. It's particularly effective for:

- Scaffolding projects — generating boilerplate, configuration files, and folder structures

- Writing and editing content — such as the Markdown pages in this very handbook

- Code generation and refactoring — writing implementations from requirements, modernising legacy code

- Debugging — explaining errors, suggesting fixes, and working through complex issues

- Documentation — generating READMEs, ADRs, and inline documentation

- Testing — writing test suites, generating test cases, and running tests to verify changes

- Exploratory prototyping — quickly proving out technical approaches before committing to them

Setting up a project for success¶

Use CLAUDE.md files¶

Every repository should include a CLAUDE.md file at the root. This gives Claude Code the context it needs about the project — tech stack, conventions, folder structure, build commands, and any specific instructions.

A good CLAUDE.md file includes:

- Project overview — what it is, what it does, who it's for

- Tech stack — languages, frameworks, key dependencies

- Repository structure — where things live and why

- Build and test commands — how to install, build, run, and test

- Conventions — naming, branching strategy, coding standards, PR expectations

- Things to avoid — common mistakes, restricted features, known pitfalls

This handbook's own CLAUDE.md is a working example of the approach. It saves time on every session because the agent starts with full context instead of having to discover it.

As your project evolves, keep the CLAUDE.md file current. Outdated instructions are worse than no instructions — they'll actively steer the agent in the wrong direction.
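One lightweight way to keep the file current is a small check that the recommended sections are still present. The sketch below is illustrative only: the heading names in REQUIRED_SECTIONS are assumptions that mirror the checklist above (Claude Code does not require any particular layout), and the function name missing_sections is hypothetical.

```python
import re

# Assumed heading names mirroring the checklist above; adjust to your project.
REQUIRED_SECTIONS = [
    "Project overview",
    "Tech stack",
    "Repository structure",
    "Build and test commands",
    "Conventions",
    "Things to avoid",
]

# Matches Markdown headings such as "## Tech stack" and captures the title text.
HEADING_PATTERN = re.compile(r"^#{1,6}[ \t]+(.+?)[ \t]*$", re.MULTILINE)


def missing_sections(markdown_text: str) -> list[str]:
    """Return the required section names that have no matching heading."""
    headings = {
        match.group(1).lower() for match in HEADING_PATTERN.finditer(markdown_text)
    }
    return [name for name in REQUIRED_SECTIONS if name.lower() not in headings]


if __name__ == "__main__":
    sample = "# My project\n\n## Project overview\n\n## Tech stack\n"
    # Lists the sections the sample file has not covered yet.
    print(missing_sections(sample))
```

A check like this could run in a PR build so that a missing section is noticed early. It only confirms that the headings exist, not that their content is still accurate.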

First run the tests¶

Any time you start a new session against an existing project, begin with:

These three words serve several purposes:

- Discovery — the agent finds the test suite and learns how to run it, making it almost certain to run tests again later to check its own work.

- Scale — test harnesses report how many tests exist, giving the agent a sense of the project's size and complexity.

- Mindset — having run the tests, the agent naturally tends to write and extend tests for its own changes.

This is a small habit with outsized impact. It sets the tone for the entire session.

Working effectively with Claude Code¶

Be specific with your prompts¶

The more context you give, the better the output. Include details about the tech stack, coding standards, and the specific outcome you want. A vague prompt produces vague code.

Instead of:

Add a search feature

Try:

Add full-text search to the products API endpoint using the existing ElasticSearch service. Follow the same patterns used in the orders search endpoint. Include unit tests.

Use red/green TDD¶

Test-driven development is a natural fit for coding agents. The pattern is simple:

- Write the tests first — describe the behaviour you want and have the agent write failing tests

- Confirm they fail (red) — this proves the tests are actually exercising something new

- Implement the code to make them pass (green) — the agent iterates until the tests go green

This approach protects against two common agent mistakes: writing code that doesn't work, and writing code that's unnecessary. It also builds a regression suite that catches future breakages.

Every good model understands "use red/green TDD" as shorthand for this entire discipline. It's a powerful four-word instruction.
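As a rough illustration of the cycle, the sketch below runs one set of tests against a stub (red) and then against an implementation (green). The slugify function and its expected behaviour are hypothetical and not taken from any CDS codebase. In a real session the stub would simply be replaced by the implementation; both are kept here only so the two states can be seen in one file.

```python
import unittest


def slugify_stub(title: str) -> str:
    """Placeholder that exists before the real code; the tests fail against it."""
    raise NotImplementedError


def slugify(title: str) -> str:
    """Implementation written afterwards to make the tests pass."""
    return "-".join(title.lower().split())


def make_test_case(func):
    """Build a TestCase that exercises whichever function is passed in."""

    class SlugifyTests(unittest.TestCase):
        def test_lowercases_and_hyphenates(self):
            self.assertEqual(func("Life at CDS"), "life-at-cds")

        def test_collapses_repeated_spaces(self):
            self.assertEqual(func("Our   Values"), "our-values")

    return SlugifyTests


def run(func) -> bool:
    """Run the tests against func and report whether they all passed."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(make_test_case(func))
    result = unittest.TextTestRunner(verbosity=0).run(suite)
    return result.wasSuccessful()


if __name__ == "__main__":
    print("red (stub passes?):", run(slugify_stub))
    print("green (implementation passes?):", run(slugify))
```

Seeing the tests fail first matters: a test that passes against the stub is not exercising the new behaviour at all.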

Have the agent test its own work¶

Automated tests aren't the only form of verification. Have the agent manually exercise the code it's written:

- For APIs: have it make curl requests against the running service

- For libraries: have it write and run small scripts that import and use the code

- For web UIs: have it use browser automation tools like Playwright to interact with the interface

Issues found through manual testing should then be fixed using red/green TDD, so they end up covered by the permanent test suite.

Iterate¶

Don't expect perfection on the first pass. Use Claude Code iteratively: generate, review, refine. This mirrors how we work as engineers anyway. If something isn't right, describe what's wrong and let the agent correct it.

Keep changes small¶

Agents can produce a lot of code quickly. Resist the temptation to let a single session grow into a massive changeset. Smaller, focused changes are easier to review, easier to test, and easier to revert if something goes wrong.

If a task is large, break it into stages. Have the agent work through them one at a time, verifying each step before moving on.

Review everything¶

Claude Code is a tool, not a replacement for engineering judgement. Always review generated code before committing. Treat it like a pull request from a colleague — it needs the same scrutiny.

Watch for:

- Hallucinated APIs or methods — the agent may reference functions or libraries that don't exist

- Subtle logic errors — code that looks plausible but doesn't handle edge cases correctly

- Security issues — injection vulnerabilities, exposed secrets, overly permissive configurations

- Over-engineering — unnecessary abstractions, unused code, features you didn't ask for

- Style drift — code that doesn't match the project's existing conventions

The agent produces code quickly. Use the time you've saved to review it thoroughly.

Getting started

If you're new to Claude Code, install it via npm: npm install -g @anthropic-ai/claude-code. You'll need a valid API key or Anthropic account to authenticate. Run claude in any repository to start a session.

Azure DevOps¶

Azure DevOps (ADO) is our primary platform for source control, CI/CD pipelines, work item tracking, and project management. We use it across the majority of our engagements.

What we use it for¶

Git repositories¶

All our source code lives in ADO Git repositories. We follow a trunk-based development model with short-lived feature branches and pull requests.

Pipelines¶

We use ADO Pipelines for continuous integration and continuous deployment. Pipelines are defined as YAML files checked into the repository, keeping our build and deployment configuration version-controlled alongside the code.

Boards¶

For work item tracking, we use ADO Boards with a lightweight process. We avoid over-complicating the workflow — typically we use a simple Backlog → In Progress → In Review → Done flow.

Branching strategy¶

We follow trunk-based development:

- main is the primary branch and is always deployable

- Feature branches are short-lived (ideally merged within a day or two)

- Pull requests require at least one approval before merging

- Branch policies enforce build validation on PRs

Pipeline standards¶

Every project should have, at minimum:

- A build pipeline that runs on every PR (compile, test, lint)

- A release pipeline that deploys to the target environment on merge to main

- Pipeline definitions stored as YAML in the repo, not configured through the UI

Flexibility

While ADO is our default, we're not dogmatic about it. Some engagements may use GitHub, GitLab, or other platforms depending on the client's existing tooling. The principles above apply regardless of the platform.

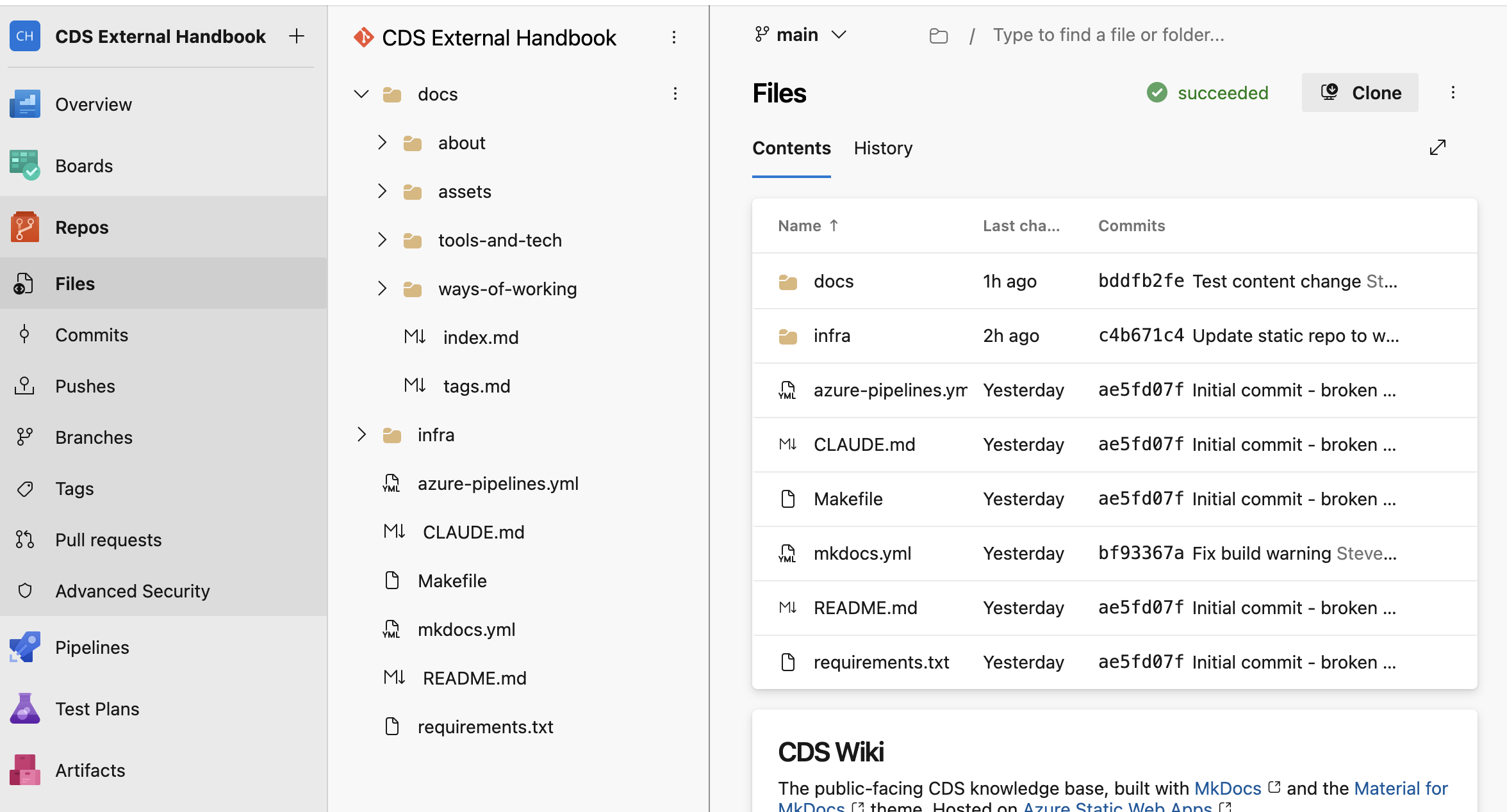

Contributing to the CDS Handbook¶

The CDS Handbook is a shared resource, maintained collaboratively across the company. If you spot something out of date, want to add a new page, or improve existing content, this guide explains how to do it.

All changes go through a pull request (PR) review before appearing on the live site. This keeps the quality and consistency of the handbook high.

Before you start¶

You need:

- An Azure DevOps (ADO) account — everyone at CDS has one

- Access to the repository: CDS External Handbook on ADO

If you cannot access the repository, speak to your line manager.

Branch naming convention¶

Whether you edit via the browser or locally, your branch name must follow this pattern:

contrib/your-name/brief-description

Keep the description short (2–4 words, hyphen-separated) and descriptive of your change.

Examples:

| Who | Change | Branch name |

|---|---|---|

| Sarah Jones | Adding a security tools page | contrib/sarah-jones/add-security-tools-page |

| James Smith | Updating the delivery approach | contrib/james-smith/update-delivery-approach |

| Alex Taylor | Fixing typos on the values page | contrib/alex-taylor/fix-typos-values-page |
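For anyone who wants to check a branch name before pushing, here is a minimal sketch of a validator. The regular expression is inferred from the examples in the table rather than taken from any official rule, so treat the lowercase-only names and the strict two-to-four word limit as assumptions. The name is_valid_branch_name is hypothetical.

```python
import re

# Assumed pattern, inferred from the examples in the table:
#   contrib/<your-name>/<brief-description>
# Lowercase-only names and a strict 2-4 word limit are assumptions of this sketch.
BRANCH_PATTERN = re.compile(
    r"contrib/"
    r"[a-z]+(?:-[a-z]+)*/"           # contributor name, e.g. sarah-jones
    r"[a-z0-9]+(?:-[a-z0-9]+){1,3}"  # two to four hyphen-separated words
)


def is_valid_branch_name(name: str) -> bool:
    """Return True if name matches the assumed contrib/<name>/<description> shape."""
    return BRANCH_PATTERN.fullmatch(name) is not None


if __name__ == "__main__":
    for candidate in (
        "contrib/sarah-jones/add-security-tools-page",
        "contrib/james-smith/update-delivery-approach",
        "feature/new-page",
        "contrib/alex-taylor/typos",
    ):
        print(candidate, is_valid_branch_name(candidate))
```

The first two candidates match the assumed pattern. The last two do not: one lacks the contrib/ prefix and the other has a one-word description.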

Making changes via the browser¶

The simplest way to contribute — no software to install.

Editing an existing page¶

-

Open the repository in ADO.

-

Click Files in the left-hand sidebar, then navigate to the

docs/folder and find the file you want to edit.Tip

Each handbook page corresponds to a

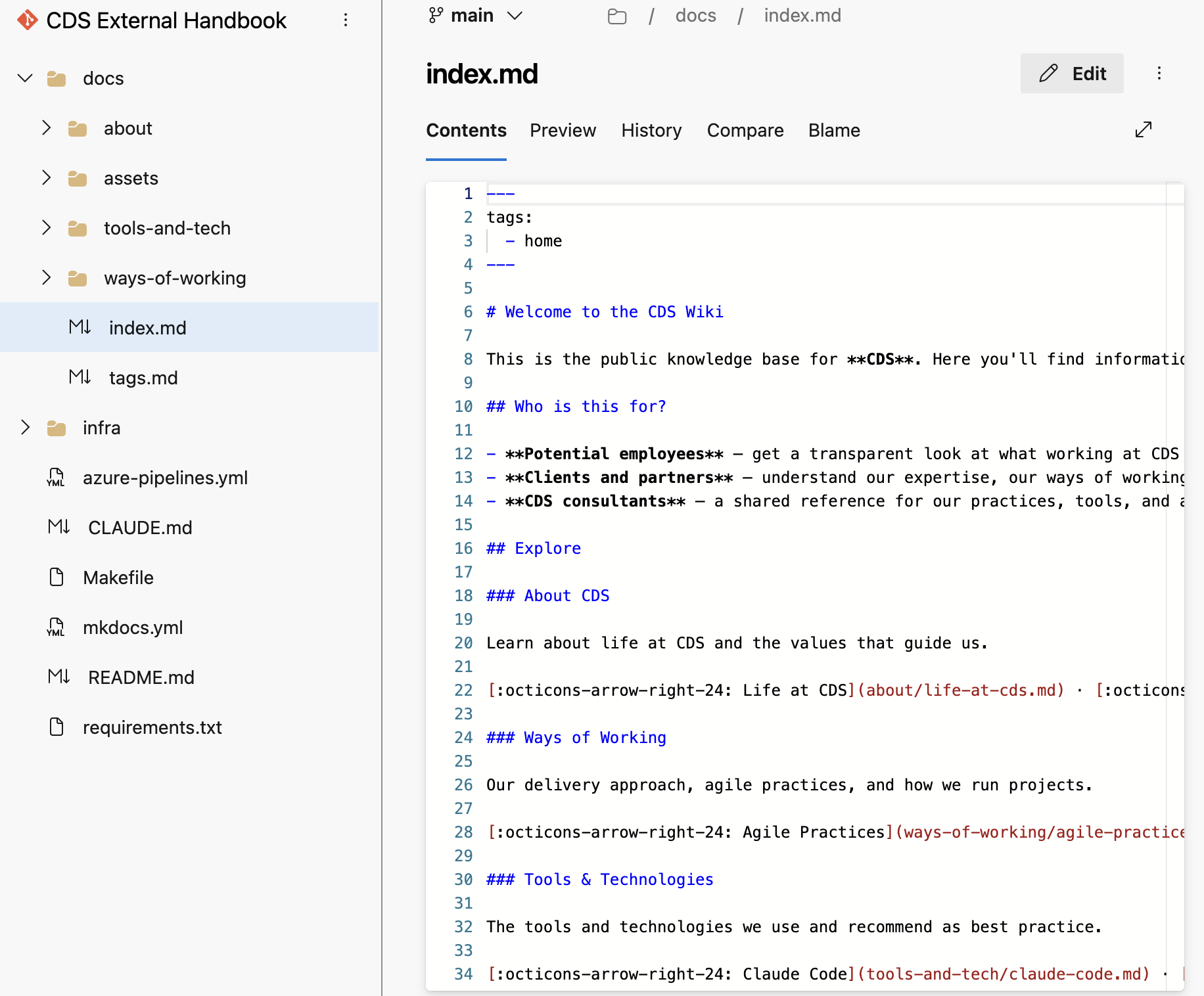

.mdfile indocs/. For example, the Life at CDS page isdocs/about/life-at-cds.md. -

Click the file name to open it, then click the Edit (pencil) icon in the top-right corner.

-

Make your changes in the editor. Refer to the Markdown guide below if you need help with formatting.

-

When you are done, click Commit in the top-right corner.

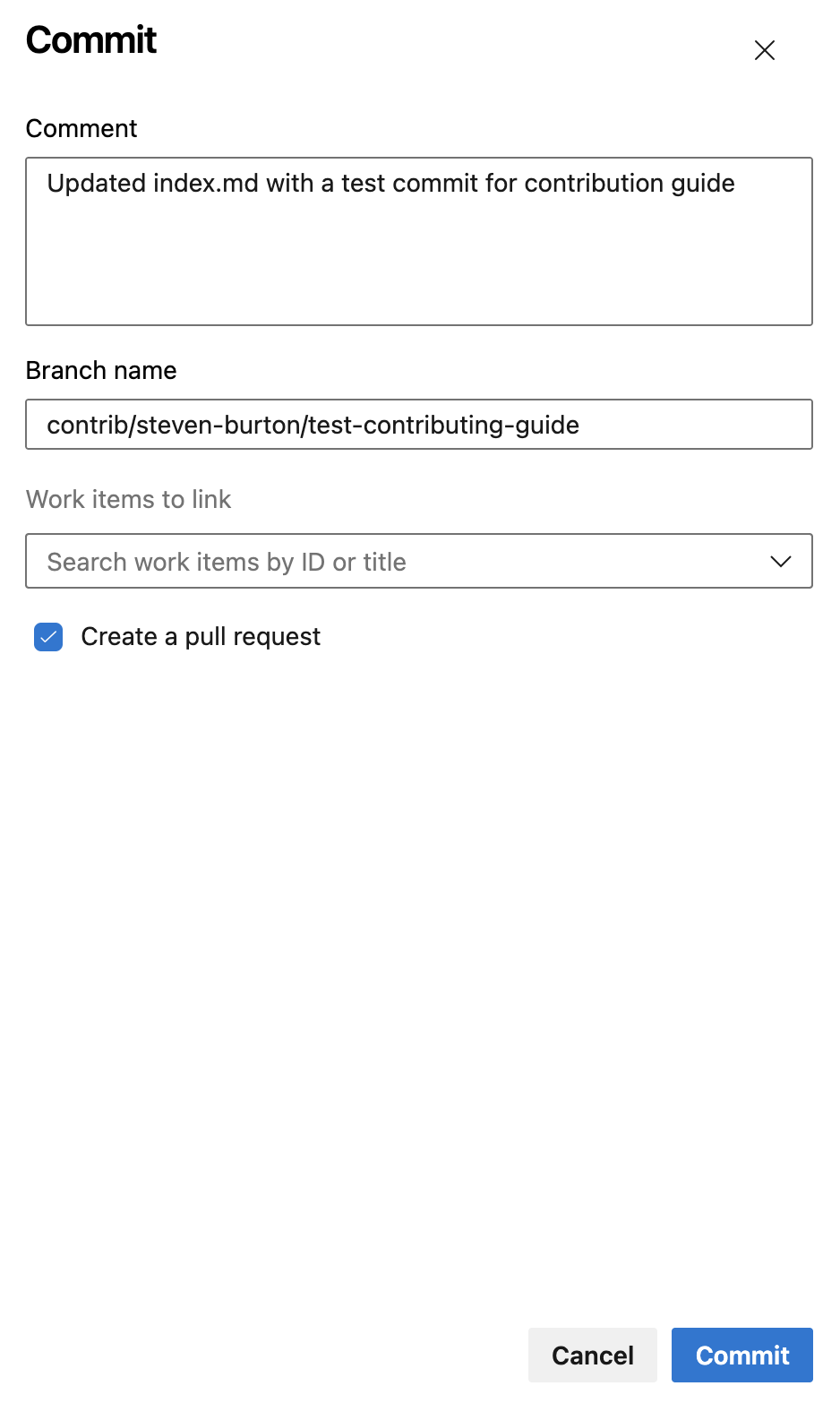

-

In the commit dialog:

- Write a short Comment describing your change (e.g. "Update agile practices — add retrospective section")

- Change the Branch name field from

mainto your new branch name, following the naming convention above - Tick Create a pull request

- Click Commit

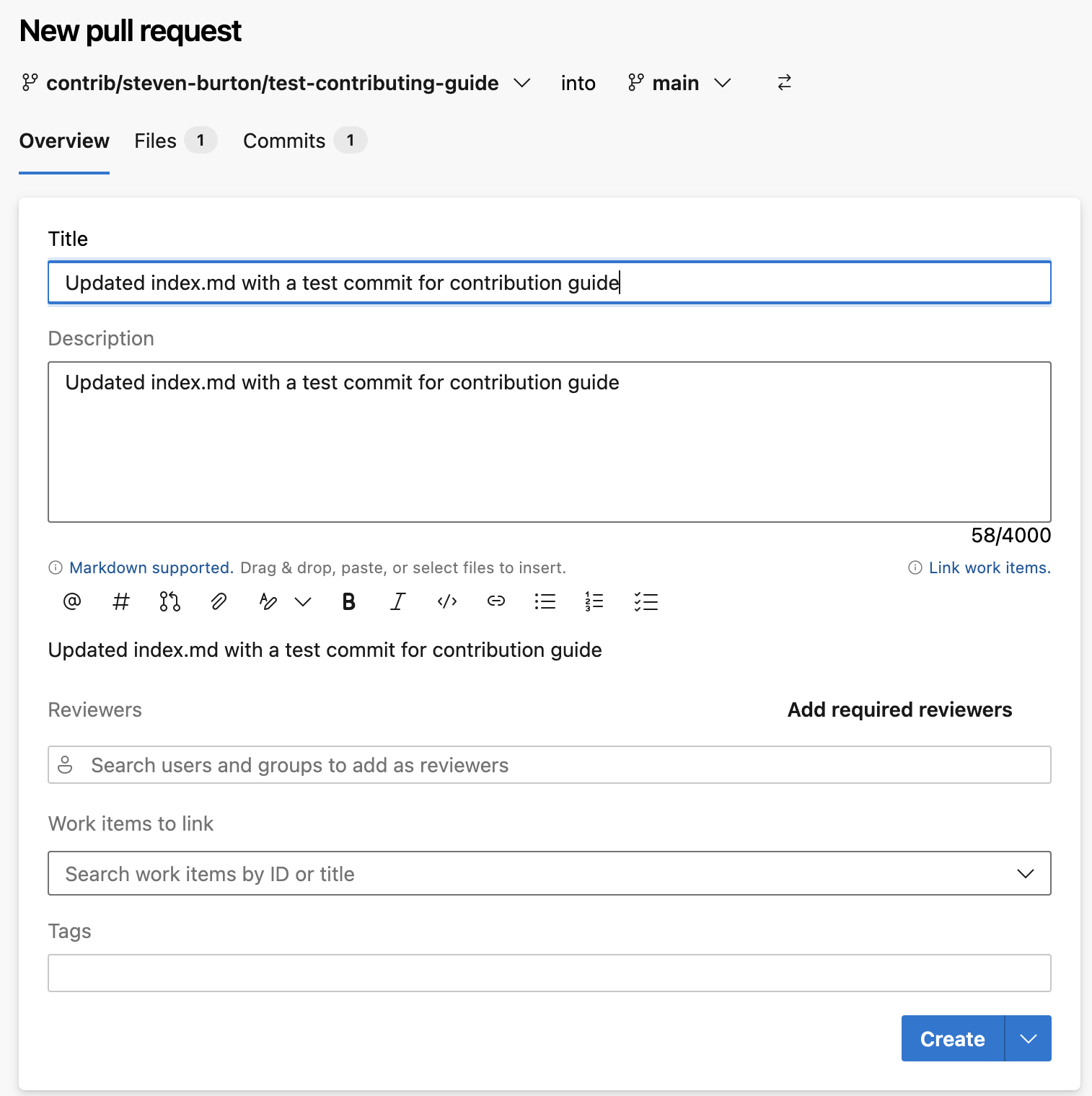

-

This opens the New Pull Request screen. ADO pre-fills the title and description from your commit comment — update them to be clear and descriptive:

- Title — a concise summary of your change (e.g. "Update agile practices — add retrospective section")

- Description — one or two sentences on what you changed and why

- Click Create

Your PR will be reviewed. You will be notified when it has been approved and the changes are live.

Creating a new page¶

Creating a new page requires one additional step — adding it to the site navigation — which the reviewer will handle before merging. You do not need to worry about this.

-

Open the repository and navigate to the relevant subfolder inside

docs/:docs/about/— life at CDS, culture, valuesdocs/ways-of-working/— agile, delivery, methodologiesdocs/tools-and-tech/— tools and technologies

-

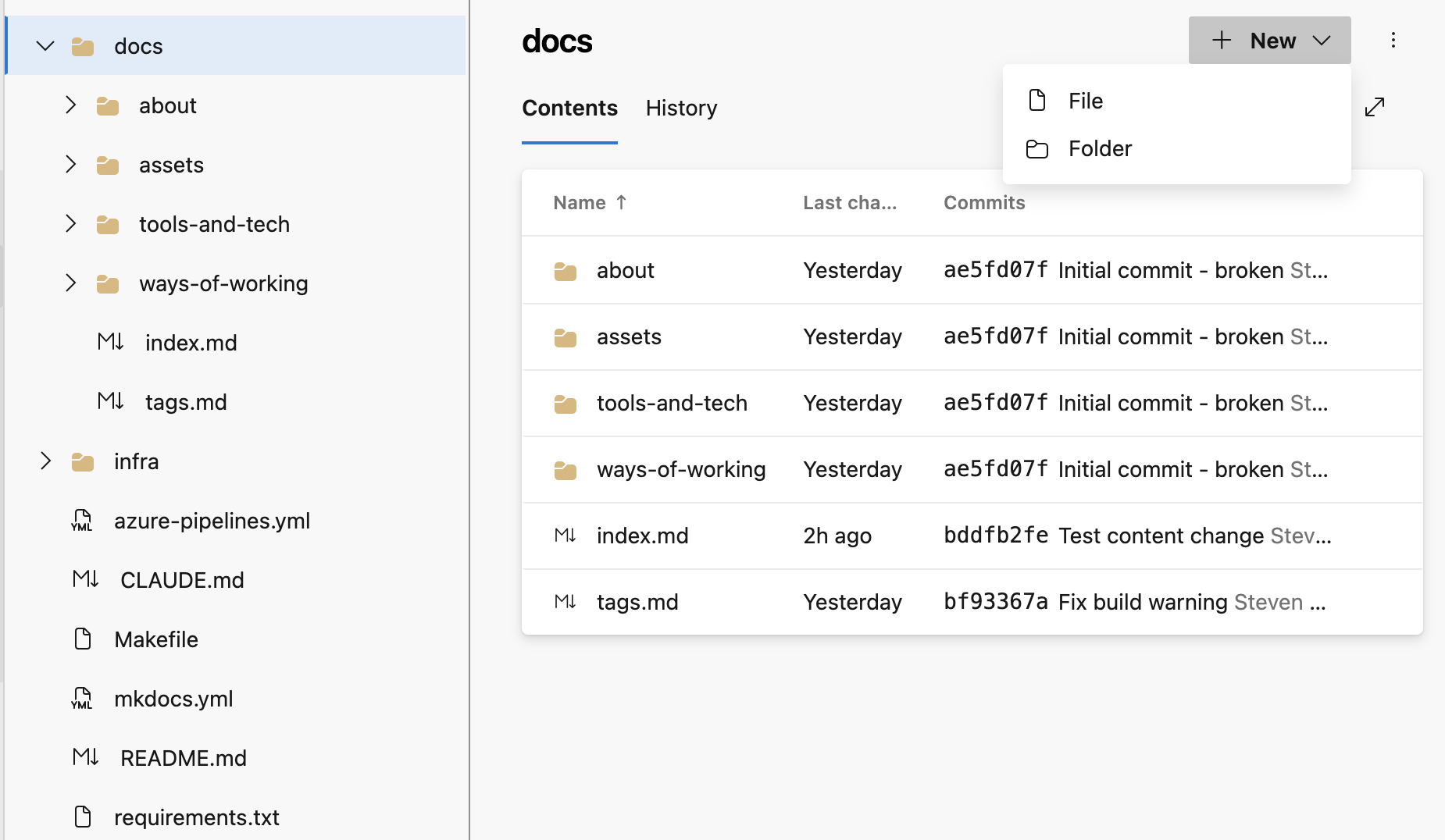

Click + New at the top-right of the file list, then select File.

-

Name your file using lowercase words separated by hyphens, ending in

.md— for example,my-new-page.md. -

Start the file with a front matter block (see Front matter and tags below), then add your content.

-

Commit to a new branch and raise a PR as described in steps 5–7 above.

-

In your PR description, mention that you have created a new page so the reviewer knows to add it to the navigation.

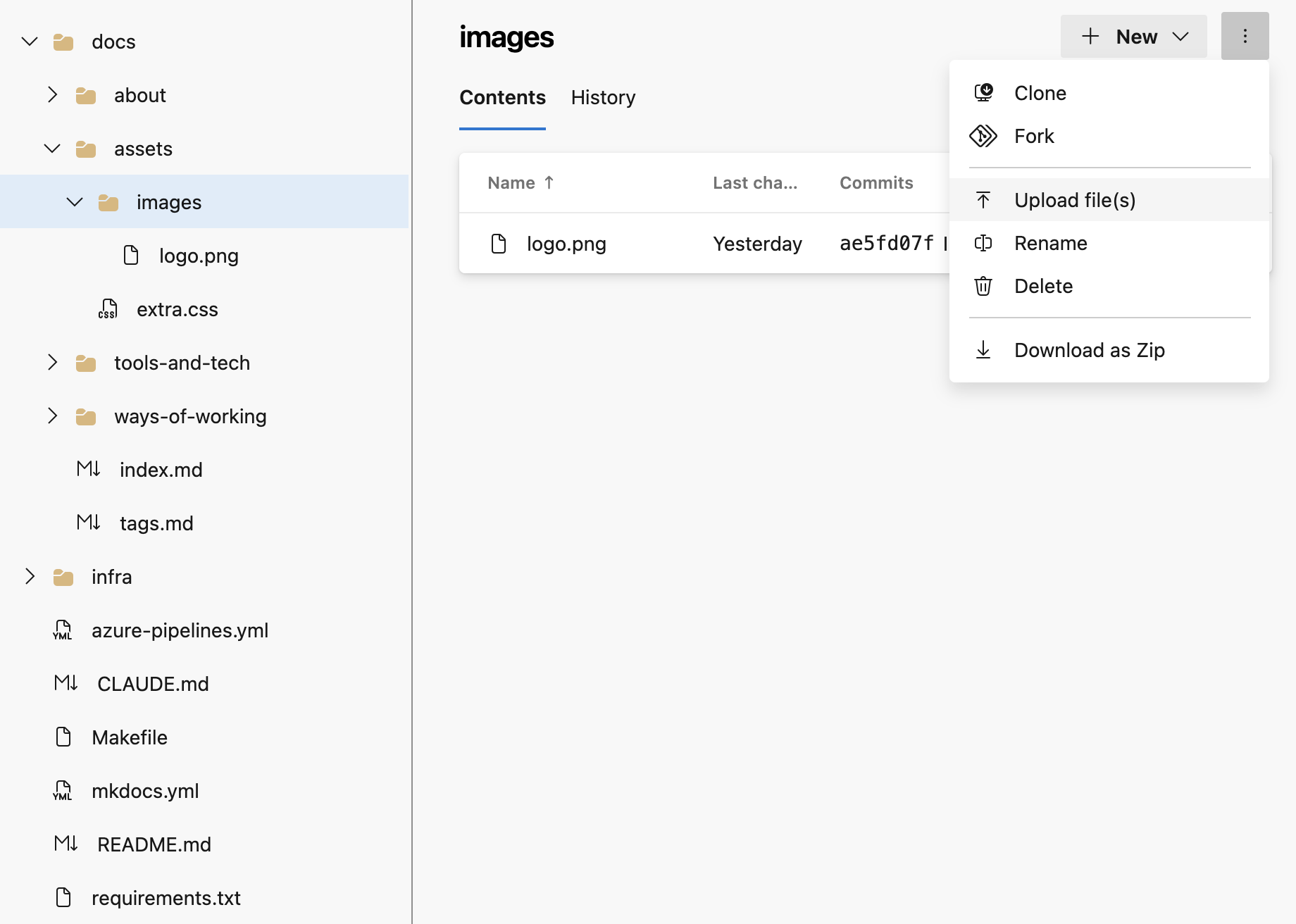
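The file naming rule and the folder list above can be checked with a few lines of Python before you raise a PR. This is a sketch under stated assumptions: it treats digits as acceptable in file names and expects pages to sit directly inside one of the three subfolders, neither of which the guide states explicitly. The function name is_valid_page_path is hypothetical.

```python
import re
from pathlib import PurePosixPath

# Subfolders of docs/ listed in the steps above.
KNOWN_SECTIONS = {"about", "ways-of-working", "tools-and-tech"}

# Lowercase words separated by hyphens, ending in .md (digits allowed as an assumption).
FILENAME_PATTERN = re.compile(r"[a-z0-9]+(?:-[a-z0-9]+)*\.md")


def is_valid_page_path(path: str) -> bool:
    """Return True if path looks like docs/<known-section>/<lowercase-hyphenated>.md."""
    parts = PurePosixPath(path).parts
    # Assumes pages are not nested deeper than docs/<section>/<file>.
    if len(parts) != 3 or parts[0] != "docs" or parts[1] not in KNOWN_SECTIONS:
        return False
    return FILENAME_PATTERN.fullmatch(parts[2]) is not None


if __name__ == "__main__":
    for candidate in (
        "docs/about/life-at-cds.md",
        "docs/tools-and-tech/my-new-page.md",
        "docs/about/My New Page.md",
        "docs/other/page.md",
    ):
        print(candidate, is_valid_page_path(candidate))
```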

Adding images via the browser¶

Images must be uploaded to the repository before you can reference them in a page.

1. Navigate to docs/assets/images/ in the ADO file browser.

2. Click the ⋮ three-dot menu at the top-right of the file list, then select Upload file(s).

3. In the commit dialog, commit to the same branch you are using for your content changes, not to main.

4. Reference the image in your Markdown file (see Images in the Markdown guide below).

Making changes locally (for developers)¶

If you are comfortable with Git, you can clone the repository, make changes locally, preview the site, and push a branch.

Prerequisites¶

- Git

- Python 3.10 or later and pip

Workflow¶

# Clone the repository (first time only)

git clone https://cdsdigital.visualstudio.com/_git/CDS%20External%20Handbook

cd "CDS External Handbook"

# Install dependencies

pip install -r requirements.txt

# Create a branch following the naming convention

git checkout -b contrib/your-name/brief-description

# Preview the site locally as you work (auto-reloads on save)

make serve

# When ready, stage and commit your changes

git add docs/your-file.md

git commit -m "Brief description of your change"

# Push your branch to ADO